Upload Folders to S3 Using AWS CLI

Introduction

Amazon Web Services (AWS) is a leading cloud infrastructure provider for hosting your servers, applications, databases, networking, domain controllers, and active directories in a widespread cloud architecture. AWS provides the Simple Storage Service (S3) for storing your objects or data with 99.999999999% (11 nines) of durability. AWS S3 is compliant with PCI-DSS, HIPAA/HITECH, FedRAMP, the EU Data Protection Directive, and FISMA, which helps satisfy regulatory requirements.

In the AWS portal, you can navigate to S3, choose the required bucket, and download or upload files. Doing this manually in the portal is a time-consuming chore. Instead, you can use the AWS Command Line Interface (CLI), which works well for bulk file operations with easy-to-apply scripts. You can schedule the execution of these scripts for unattended object downloads and uploads.

Configure AWS CLI

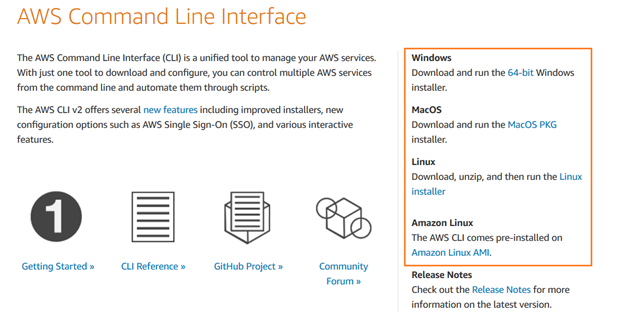

Download the AWS CLI and install AWS Command Line Interface V2 on Windows, macOS, or Linux operating systems.

You can follow the installation wizard for a quick setup.

Create an IAM user

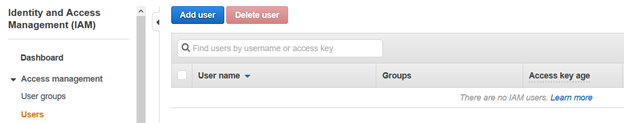

To access the AWS S3 bucket using the command line interface, we need to set up an IAM user. In the AWS portal, navigate to Identity and Access Management (IAM) and click Add User.

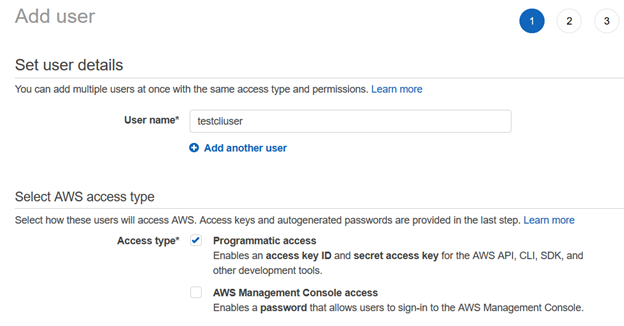

On the Add User page, enter the username and select Programmatic access as the access type.

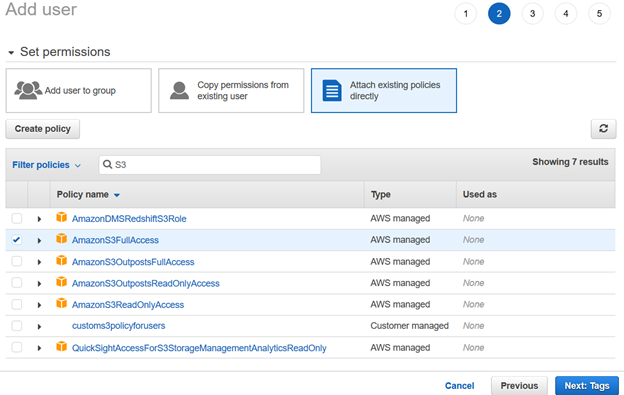

Next, we provide permissions to the IAM user using existing policies. For this article, we have chosen [AmazonS3FullAccess] from the AWS managed policies.

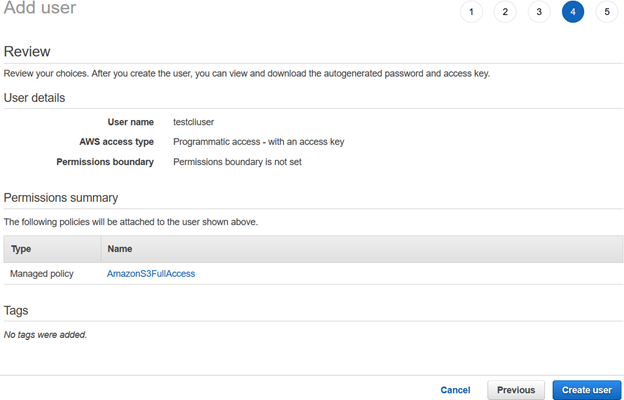

Review your IAM user configuration and click Create user.

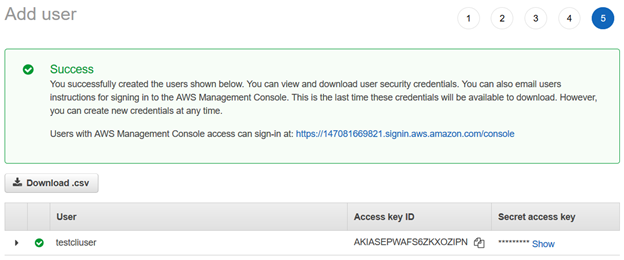

Once the AWS IAM user is created, AWS provides the Access Key ID and Secret Access Key that the AWS CLI uses to connect.

Note: You should copy and save these credentials. AWS does not permit you to retrieve them at a later stage.

Configure AWS Profile On Your Computer

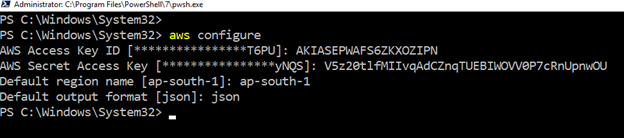

To work with Amazon Web Services resources from the AWS CLI, launch PowerShell and run the following command.

>aws configure

It requires the following user inputs:

- IAM user Access Key ID

- AWS Secret Access Key

- Default AWS region-name

- Default output format
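As a rough illustration of what these four inputs turn into, the sketch below renders the two INI files that the AWS CLI reads for the default profile (`credentials` and `config` under the `.aws` folder in your home directory). The key values are the placeholder examples used in AWS documentation, not real credentials, and the helper name `render_ini` is hypothetical.

```python
import configparser
import io


def render_ini(section, values):
    """Render one INI section as text, in the layout the AWS CLI reads."""
    # interpolation=None keeps any '%' in a value from being treated specially
    parser = configparser.ConfigParser(interpolation=None)
    parser[section] = values
    buffer = io.StringIO()
    parser.write(buffer)
    return buffer.getvalue()


# Placeholder values only (assumption): replace with your own IAM user's keys.
credentials_text = render_ini("default", {
    "aws_access_key_id": "AKIAIOSFODNN7EXAMPLE",
    "aws_secret_access_key": "wJalrXUtnFEMI/K7MDENG/bPxRfiCYEXAMPLEKEY",
})
config_text = render_ini("default", {
    "region": "us-east-1",
    "output": "json",
})

print(credentials_text)
print(config_text)
```

The sketch only prints the text; it does not write anything to disk, so it is safe to run next to an existing profile.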

Create S3 Bucket Using AWS CLI

To store the files or objects, we need an S3 bucket. We can create it using either the AWS portal or the AWS CLI.

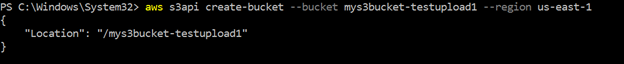

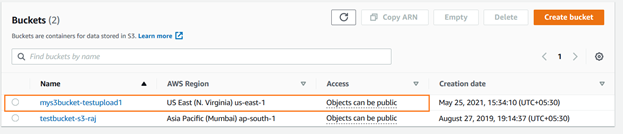

The following CLI command creates a bucket named [mys3bucket-testupload1] in the us-east-1 region. The command returns the bucket name in the output, as shown below.

>aws s3api create-bucket --bucket mys3bucket-testupload1 --region us-east-1

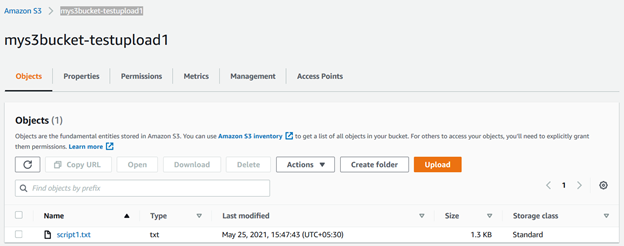

You can verify the newly created S3 bucket using the AWS console. As shown below, the [mys3bucket-testupload1] bucket is created in US East (N. Virginia).

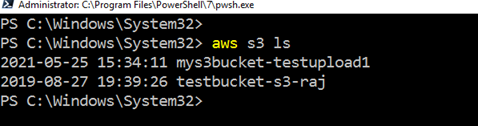
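If you script bucket creation for other regions, note that outside us-east-1 the create-bucket command also needs a `--create-bucket-configuration LocationConstraint=<region>` argument. The sketch below only builds the argument list; the function name `create_bucket_command` is hypothetical, and actually running the command (for example with `subprocess.run`) is left to the caller.

```python
def create_bucket_command(bucket, region):
    """Build the argument list for 'aws s3api create-bucket'."""
    args = ["aws", "s3api", "create-bucket",
            "--bucket", bucket, "--region", region]
    # us-east-1 is the only region that takes no location constraint
    if region != "us-east-1":
        args += ["--create-bucket-configuration",
                 f"LocationConstraint={region}"]
    return args


print(" ".join(create_bucket_command("mys3bucket-testupload1", "us-east-1")))
print(" ".join(create_bucket_command("mys3bucket-testupload1", "eu-west-1")))
```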

To list the existing S3 buckets using the AWS CLI, run the following command:

>aws s3 ls

Uploading Objects in the S3 Bucket Using AWS CLI

We can upload a single file or multiple files together to the AWS S3 bucket using the AWS CLI. Suppose we have a single file to upload. The file is stored locally in C:\S3Files with the name script1.txt.

To upload the single file, use the following CLI script.

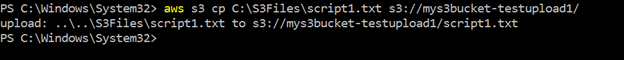

>aws s3 cp C:\S3Files\Script1.txt s3://mys3bucket-testupload1/

It uploads the file and returns the source and destination file paths in the output.

Note: The time to upload to the S3 bucket depends on the file size and the network bandwidth. For demo purposes, I used a small file of a few KB.

You can refresh the S3 bucket [mys3bucket-testupload1] and view the file stored in it.

Similarly, we can use the same CLI script with a slight modification to upload all files from the source to the destination S3 bucket. Here, we use the --recursive parameter to upload multiple files together:

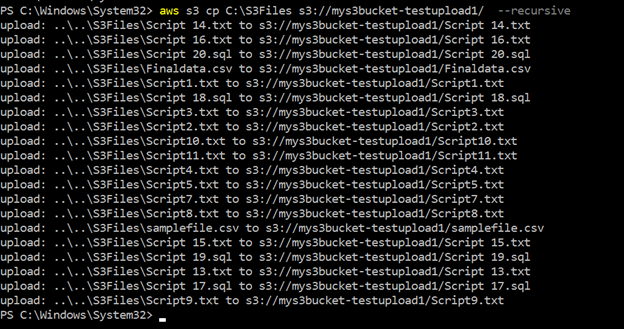

>aws s3 cp c:\s3files s3://mys3bucket-testupload1/ --recursive

As shown below, it uploads all files stored inside the local directory c:\S3Files to the S3 bucket. You get the progress of each upload in the console.

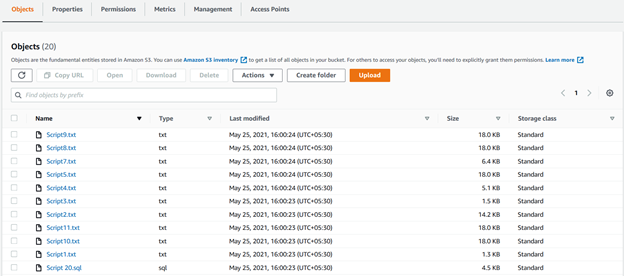

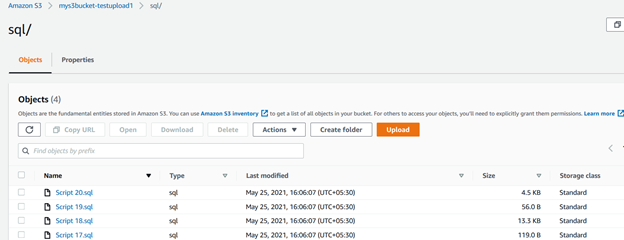

The following figure shows all the files uploaded to the S3 bucket with the recursive parameter:

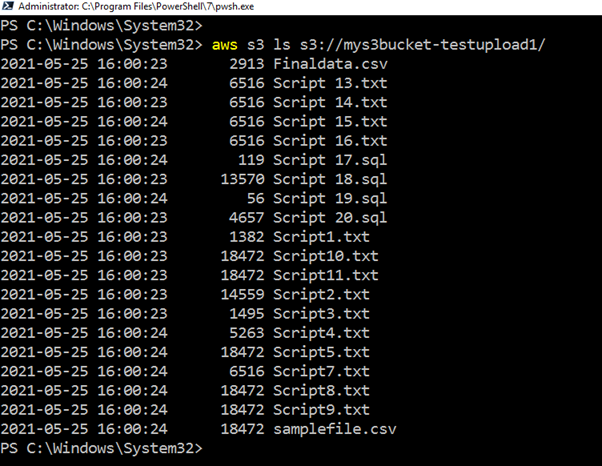
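To make the recursive behavior concrete, here is a minimal sketch of how each local file maps to a destination path: the path relative to the source folder becomes the object key, with forward slashes. The helper `planned_uploads` is hypothetical and only lists what would be uploaded; it does not call AWS. The demo builds a throwaway folder rather than reading C:\S3Files.

```python
import tempfile
from pathlib import Path


def planned_uploads(source_dir, bucket, prefix=""):
    """List (local_path, s3_uri) pairs for every file under source_dir."""
    source = Path(source_dir)
    plan = []
    for path in sorted(source.rglob("*")):
        if path.is_file():
            # The path relative to the source folder becomes the object key
            key = prefix + path.relative_to(source).as_posix()
            plan.append((path, f"s3://{bucket}/{key}"))
    return plan


# Demo on a temporary folder with one nested file
with tempfile.TemporaryDirectory() as tmp:
    root = Path(tmp)
    (root / "logs").mkdir()
    (root / "script1.txt").write_text("select 1;")
    (root / "logs" / "run.log").write_text("ok")
    demo_keys = [uri for _, uri in planned_uploads(root, "mys3bucket-testupload1")]

print(demo_keys)
```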

If you do not want to go to the AWS portal to verify the uploaded list, run the following CLI script. It returns all files and their upload timestamps.

>aws s3 ls s3://mys3bucket-testupload1

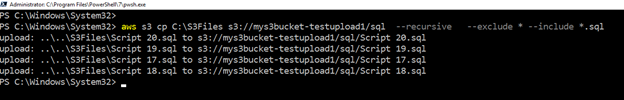

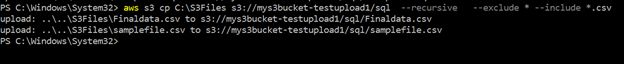

Suppose we want to upload only files with a specific extension into a separate folder of AWS S3. You can filter objects using the CLI script as well. For this purpose, the script uses the --include and --exclude parameters.

For example, the command below checks files in the source directory (C:\S3Files), filters files with the .sql extension, and uploads them to the S3 bucket. As written, the files land in the bucket root; to place them in an SQL/ folder, append SQL/ to the destination path (s3://mys3bucket-testupload1/SQL/). Here, we exclude everything first and then specify the extension using the --include parameter:

>aws s3 cp C:\S3Files s3://mys3bucket-testupload1/ --recursive --exclude * --include *.sql

In the script output, you can verify that only files with the .sql extension were uploaded.

Similarly, the script below uploads files with the .csv extension into the S3 bucket.

>aws s3 cp C:\S3Files s3://mys3bucket-testupload1/ --recursive --exclude * --include *.csv

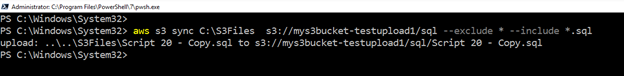

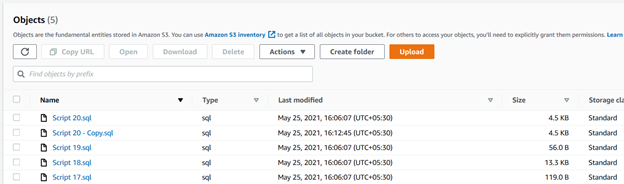
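The order of the filters matters: every file is included by default, and a filter that appears later in the command takes precedence over earlier ones. That is why --exclude * comes before --include. The sketch below is a simplified model of that rule using Python's `fnmatch` patterns; the function `is_selected` is hypothetical and is not the CLI's own implementation.

```python
from fnmatch import fnmatchcase


def is_selected(relative_path, filters):
    """Apply (kind, pattern) filters in order; the last match wins."""
    selected = True  # with no filters, every file is included
    for kind, pattern in filters:
        if fnmatchcase(relative_path, pattern):
            selected = (kind == "include")
    return selected


sql_only = [("exclude", "*"), ("include", "*.sql")]
wrong_order = [("include", "*.sql"), ("exclude", "*")]

for name in ["backup.sql", "report.csv", "notes.txt"]:
    print(name, is_selected(name, sql_only), is_selected(name, wrong_order))
```

With the filters reversed, the trailing exclude matches every file, so nothing would be uploaded. On shells that expand wildcards themselves, such as bash, the patterns should be quoted ("*" and "*.sql").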

Upload New or Modified Files from Source Folder to S3 Bucket

Suppose you use an S3 bucket to move your database transaction log backups. In that case, you only want to upload files that are new or have changed since the last run.

For this purpose, we use the sync command. It recursively copies new and modified files from the source directory to the destination S3 bucket.

>aws s3 sync C:\S3Files s3://mys3bucket-testupload1/ --exclude * --include *.sql

The sync command is recursive by default, so it does not take the --recursive parameter. In our run, it uploaded a file that was absent from the S3 bucket. Similarly, if you change any existing file in the source folder, the CLI script will pick it up and upload it to the S3 bucket.
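The decision sync makes for each local file can be sketched as follows, assuming its default comparison: a file is uploaded when it is missing from the bucket, when its size differs, or when the local copy was modified more recently than the S3 object. `FileInfo` and `needs_upload` are hypothetical names used only for this illustration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class FileInfo:
    size: int      # size in bytes
    mtime: float   # last-modified time as a Unix timestamp


def needs_upload(local: FileInfo, remote: Optional[FileInfo]) -> bool:
    """Return True when the local file should be copied to the bucket."""
    if remote is None:            # not in the bucket yet
        return True
    if local.size != remote.size:  # content length changed
        return True
    return local.mtime > remote.mtime  # local copy is newer


print(needs_upload(FileInfo(100, 2000.0), None))                    # new file
print(needs_upload(FileInfo(120, 2000.0), FileInfo(100, 2000.0)))   # size changed
print(needs_upload(FileInfo(100, 1000.0), FileInfo(100, 2000.0)))   # unchanged
```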

Summary

AWS CLI scripts can make storing files in an S3 bucket easier. You can use them to upload or synchronize files between local folders and the S3 bucket. It is a quick way to deploy and work with objects in the AWS cloud.


Tags: AWS, aws cli, aws s3, cloud platform Last modified: September 16, 2021


Source: https://codingsight.com/upload-files-to-aws-s3-with-the-aws-cli/
